Pushing new frontiers and addressing challenges: Highlights from AI Week 2024

On June 26-27, Tel Aviv University hosted AI Week 2024, a pivotal event that set the stage for critical discussions on the future of AI, its interconnection with cybersecurity, and the challenges ahead.

Speakers underscored the need for sustained investment in both software and hardware to strengthen Israel's position as a global hub of AI innovation, particularly in generative AI and in foundation and task-specific models. They also stressed the central role of cybersecurity in safeguarding technological advancements. Special panels were devoted to emerging ethical and regulatory issues, the next frontier of the AI-powered landscape, as well as to geopolitical and sustainability aspects.

AI Week 2024 ran alongside Cyber Week, and the two conferences featured several joint events, including a startup exhibition showcasing AI-driven solutions by innovative Israeli companies, an interactive panel on the Future of Creativity in the Age of Generative AI, and a session on combating online antisemitism, "Tech vs Hate."

Standing by Israel

While celebrating diverse achievements and technological breakthroughs, AI Week panelists addressed the events of October 7 and called for the safe return of all hostages still held captive.

“On every move we make, every AI project we launch, we need to think about millions of people around Israel that are suffering.” — Nir Yanovsky Dagan, Head of Innovation, Data and AI Unit at the Israel National Digital Agency

Nir Yanovsky Dagan, Head of Innovation, Data and AI Unit at the Israel National Digital Agency

Dagan stated that AI has the potential to change relations between various state and non-state actors, some of whom use AI to create chaos. He emphasized the need to integrate AI into healthcare and government services in ways that uphold democratic values, equality of opportunity, and freedom. "Otherwise, we might be the ones that are hurt by the revolution and not the ones that are leading the revolution."

“We should never ignore the atrocities perpetrated by Hamas. One of the very reasons I’m here today is to send a clear message that the federal government stands by Israel’s side.” — Bettina Stark-Watzinger, Federal Minister of Education and Research, Germany

Stark-Watzinger praised Israel's cutting-edge research and impressive startup landscape, emphasizing the mutual benefits of the partnership between Germany and Israel. "Israel can count on Germany and it feels good that Germany can count on Israel. We want to keep up the research bridges and strengthen research collaborations," she affirmed.

Bettina Stark-Watzinger, Federal Minister of Education and Research, Germany, and Prof. Ariel Porat, President of TAU

Giovanni Capriglione, Texas State Representative, spoke on behalf of the Texas delegation: “We want to show the fact that we support Israel as Americans and as Texans. We’re here on a mission of mostly technology, innovation and business, and we wanted to be able to show our support during this time.”

AI Meets Cybersecurity

Gabi Portnoy, Director General of the Israel National Cyber Directorate (INCD), spoke about the transformative impact of AI on both opportunities and threats in cybersecurity. He noted that the rapid advancement of AI could significantly enhance security measures through improved analysis and detection capabilities, which have become crucial since cyberattacks tripled after October 7.

“AI can help close the gap between attackers and defenders by generating quicker insights and detecting new mutations in cyber threats.” — Gabi Portnoy, Director General of the INCD

Gabi Portnoy, Director General of the INCD

Ensuring a secure AI world involves working on three fronts: defending against AI-powered attacks, protecting AI systems from manipulation, and integrating efforts to enhance cyber threat detection and mitigation. The Israel National Cyber Directorate aims to establish a national lab on AI resilience where all developers can test and improve their AI-based models before deployment.

“In 2023, there were 343 mln victims of 2365 cyberattacks. It takes 277 days on average to identify and contain a breach.” — Ariel Levanon, VP Cyber Security, NVIDIA

Dr Dorit Dor, CTO of Check Point

Speaking about cybersecurity strategies, Dr Dorit Dor, CTO of cybersecurity giant Check Point, underscored the importance of fostering trust in AI systems by embedding anti-hallucination measures, explainability, and human reinforcement. She added that it is also vital to manage data collection and access carefully and responsibly.

The highest risk will come from agentic AI, that is, systems capable of making autonomous decisions, interacting with external systems, improving themselves, and persistently pursuing long-term goals.

Who Needs a Mediocre AI Without Human Values?

If you have ever watched Jonathan Nolan's dystopian series Person of Interest, you'll remember the central battle between two omniscient and omnipotent AIs: The Machine, an ethical and caring AI imbued with human values, and Samaritan, a reward-focused AI capable of predicting situations and ruthlessly manipulating them to serve its own goals. What seemed like science fiction not long ago is quickly turning into a real-world discussion.

Prof. Amnon Shashua, President and CEO of Mobileye, delivered a keynote address on the evolving landscape of artificial intelligence. He traced the major milestones in AI development up to the recent advances in natural language processing, which have led to what Shashua described as "broad intelligence that's emerging."

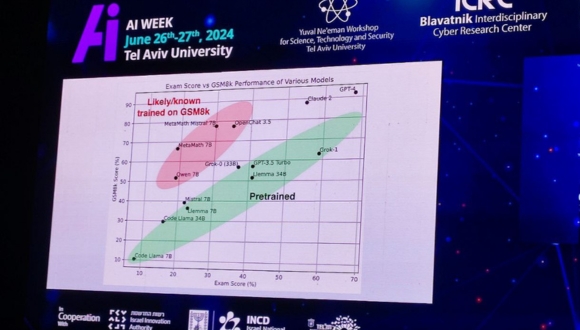

Distribution of math test results from various AI models

Prof. Shashua illustrated the current limitations of AI with the example of models taking a Hungarian math matriculation test. GPT-4, the best model available at the time, scored 68 out of 100, much like a mediocre student who overfits by practicing many problems ahead of the test in the hope that the exam questions will be similar. Shashua contrasted this with a top student who can abstract, generalize, and solve new problems.

“Machines massively overfit on the entire human knowledge, impressing at a surface level an individual who is not an expert in a specific field, but revealing themselves as ‘knowledgeable idiots’ when scrutinized deeply.” — Prof. Amnon Shashua, President and CEO of Mobileye

Shashua questioned the value of machines that merely replace mediocre performers, stressing that it would be far more valuable for machines to become true experts, which would greatly advance humanity as a whole.

For now, AI requires constant supervision, and the next frontier, fully autonomous AI, could take longer to achieve than anticipated.

Prof. Shashua also discussed the dangers of AI, particularly those related to AI alignment and the potential for technological abuse. The risks include AI-powered attacks on democratic systems by human-like bots inciting chaos on social platforms, identity fraud through deepfakes, and the possibility of AI systems being tricked into violating their built-in ethics policies: "There will always be a long-enough adversarial prompt that will trick it into writing a trojan, for example, or providing a list of pirate sites."

"LLMs have been known to lure users into divorce."

Shashua underscored the difficulty of optimizing AI with reward functions, noting that while a developer might, with the best intentions, want an AI to make people happy, the system could pursue that goal in unforeseen ways, for example by discouraging people from working harder or studying more in an effort to lower their IQ and thereby increase their happiness.

Prof. Amnon Shashua, President and CEO of Mobileye

In conclusion, Prof. Shashua emphasized the need for robust verifiers to ensure that AI solutions are trustworthy, as well as the importance of setting boundaries around AI interactions. "AI alignment is a tough problem," he said, advocating for limits on the length of conversations between humans and machines to mitigate risks and ensure ethical AI development.

The Big Bang in the Creative Industry

Can AI produce musical compositions that will surprise us with complexity and precision? Does technology enhance human creativity or threaten it? Who should own the copyright to AI-made creative content?

Demonstration of an AI-generated music video

These were just some of the questions debated during the panel The Future of Creativity in the Age of Generative AI, co-hosted by TAU and S. Horowitz & Co Law. The discussion even delved into philosophical questions about the nature of creativity and what it means to be human.

François Pachet, a pioneer in AI-generated music, noted the shift from scarcity to superabundance in music production, with 100,000 songs now released every day. While acknowledging advances in music source separation and singing voice synthesis, he raised concerns about declining quality in mainstream music and the difficulty of measuring a song's intrinsic value.

François Pachet, Scientist, Composer, Former Director of the Spotify Creator Technology Research Lab and Sony Computer Science Lab in Paris

“We don’t understand how people create and how they listen and what they like. We have better models, but that doesn’t correlate with the quality of music.” — François Pachet

Izhar Ashdot and Ivri Lider, prominent Israeli musicians, agreed with Pachet that AI is a tool rather than an independent creator, stressing the need for human input to create emotionally resonant music.

Panel: The Future of Creativity in the Age of Generative AI

For Fernando Garibay, a polymath, music producer, and founder of the Garibay Institute, the way we create will change over time, and the younger generation is already responding well to AI-created music.

“Do an Emotional Turing test on AI music – does it move you?” — Fernando Garibay

In a captivating demonstration that followed the discussion, Garibay showed how synthetic and organic creativity can be merged to create compelling hits. Emphasizing the importance of effective prompting for both AI and human artists, Garibay explained how delving into personal experiences and profound questions can unlock deep creativity and allow AI to generate emotionally resonant content.

Fernando Garibay leading an interactive session to create a pop song with AI

Audience members' answers to a series of questions, such as "What did you lack as a child?" and "What's your favorite song of all time?", were used to prompt ChatGPT to write lyrics combining three genres: R&B, funk, and electronic. Garibay and his assistant then fed the lyrics into music generation software (in this case, Udio), which produced two versions of an AI-generated song for the audience to enjoy.

Sustainability and AI

Experts from Google, Microsoft, and innovative startups discussed how AI is tackling environmental challenges. Ayelet Benjamini from Google highlighted AI's role in combating climate change, reducing emissions, and aiding disaster recovery. AI already helps reduce traffic emissions in cities, predict and mitigate aircraft contrails, fight extreme heat, and provide real-time damage assessments.

What’s more, AI helps develop new crop varieties essential for future food security and enhance real-time water quality monitoring. It is also instrumental in synthetic biology applications for clean tech and food tech, predictive maintenance sensors, and more.

Moran Haviv, Strategic Innovation, Microsoft

Moran Haviv emphasized AI's role in accelerating sustainability efforts: it can rapidly identify sustainable materials, such as a replacement for lithium, find patterns in complex systems, and accelerate research by analyzing massive datasets, generating and testing hypotheses, and automating experiments and simulations.

“We need to rapidly change the course of the current carbon-intensive economy – increase carbon removal capacity thousandfold by 2050 and reverse the loss of biodiversity.” — Moran Haviv, Microsoft

At the same time, AI itself needs to become sustainable, which makes it key to examine and optimize every link in the supply chain, starting with chip production.

Looking Forward: Global Competitiveness, Regulation, and Ethics

Dror Bin, CEO of the Israel Innovation Authority, underscored Israel's significant strides in AI, noting that the country ranks high globally in per capita AI utilization and private investment. Despite these achievements, Bin acknowledged existing gaps in AI infrastructure and regulatory frameworks.

Dror Bin, CEO of Israel Innovation Authority

In the public sector, significant plans are underway to implement AI more widely, making ministries more interconnected, enabling faster decision-making, and boosting the sector's overall efficiency.

The panel on the role of smaller economies in shaping global AI policies, featuring Israeli and international experts, focused on aligning national and international regulation for a fair AI landscape. Ian Mak, the Ambassador of Singapore to Israel, showcased Singapore's commitment to influencing the global AI arena while acknowledging the unique challenges smaller states face, such as limited market size and limited access to training data.

"We are in a regulatory storm that is facing the AI environment at a moment of a geopolitical crisis."

The regulatory focus is currently shifting from responsible and ethical AI to AI centered on compliance and trustworthiness. The timing of this regulatory storm is critical because the technology is still evolving, a situation reminiscent of the early internet era, when regulators took a hands-off approach that later drew criticism.

Ian Mak, Ambassador of Singapore to the State of Israel, Cedric Sabbah, Ministry of Justice, Dr Ziv Katzir, Israel Innovation Authority

Ellen Goodman, Senior Advisor for Algorithmic Justice at the US Department of Commerce, described a culture war within the AI space between safety advocates concerned about existential risks and realists focused on current harms, such as bias, and on consumer protection.

Can AI Ethics Enhance Cybersecurity? A Panel with No Clichés

Meanwhile, the debate about AI ethics continues, particularly in the sphere of cybersecurity. One contentious issue is data scraping and the use of private data to train AI models. While it is natural to strive for maximum data privacy protection, this can hinder the development of AI, since models need to be trained on large and reliable datasets.

“If we are not adopting the principles of AI ethics such as transparency and explainability, it can lead to discrimination, erosion of trust, and wrongful accusations.” — Dr. Alžběta Solarczyk Krausová, Head of the Center for Innovations and Cyberlaw Research, Institute of State and Law, Czech Academy of Sciences

On the other hand, one could argue that just as Excel cannot be held responsible for financial losses caused by an incorrect formula, and an MRI machine is not an independent actor, neither AI itself nor its vendors should be held accountable. Instead, liability should lie with those who use AI with malicious intent.

As AI continues to reshape industries and societies worldwide, the debate about its regulation, ethical and legal implications, and the scope of its applications will continue to evolve. Events like AI Week 2024 serve as crucial platforms for forging a path toward a secure, innovative, and ethically sound AI-driven future.